After a wait of nearly a decade, a new console generation launched at the tail end of 2013 to overwhelming enthusiasm. For the first time ever, two major consoles released not only in the same year, but in the same month as one another: Microsoft's Xbox One and Sony's PlayStation 4.

In the months leading up to the consoles' release, Microsoft famously changed its policies regarding the new Xbox system, which resulted in wariness from fans. But now, almost three years later, both consoles have sold tens of millions of units and are already looking to the future with new, upgraded systems.

But the thing is, there have been more than a few reports indicating that this generation will be the last console generation ever. Whether that's true remains to be seen (we doubt it). However, if Microsoft, Sony, and Nintendo are to have consumers buy into a new generation, gamers are going to need some guarantees.

Here are 15 Things We Need To See In The Next Generation Video Game Consoles.

15. Full 4K Support

Microsoft and Sony are both currently immersed in developing 4K-compatible consoles: Microsoft with Project Scorpio and Sony with PS4 Neo, two names we're assuming are still placeholders. Due to the lengthy lifespan of the previous console generation, video gamers were anxiously anticipating a new generation of consoles which would deliver full-HD graphics with top-tier gameplay. Unfortunately, the current consoles' advancements, while impressive in comparison to the previous generation, have proven limiting, in particular with the advent of 4K televisions.

At the moment, 4K is what 1080p was ten years ago. It's the future, and we all know that -- but getting there will take some time. Sure, 4K televisions and 4K Blu-ray discs are currently available, with some games even supporting 4K resolution, but the overall video game industry will not adhere to a 4K standard for some years to come. But that doesn't mean console manufacturers should ignore the technology. In fact, if consumers are to buy into a new console generation, those consoles need to support everything a computer would. Otherwise, there will always be a sense of inferiority surrounding consoles.

14. Cross-Platform Gameplay

Despite all the advancements in the video game industry, players have wanted one thing that always seemed like a pipe dream: cross-platform gameplay, the act of playing a game on one platform with someone who is playing the game on another platform (e.g. playing Battlefield 4 on Xbox One with someone on PS4). Allowing cross-platform play would require not only that the consoles be compatible, but also that the manufacturers work together -- not apart.

There are both advantages and disadvantages to allowing cross-platform play. Doing so would mean gamers would be able to choose whichever platform they prefer and still be able to play with their friends, no matter which platform those friends are playing on. However, therein lies the disadvantage. Console manufacturers would lose the exclusivity factor that indirectly forces consumers to choose the platform all of their friends have.

Still, cross-platform play is the future -- and Microsoft has proven that with its implementation of Xbox Play Anywhere, in which virtually all Microsoft-produced games will be cross-platform compatible with PC players. Additionally, Microsoft has said the Xbox One is now "ready" for cross-platform play with PS4. Perhaps the next generation of consoles will be designed for cross-play from the ground up.

13. Backward Compatibility

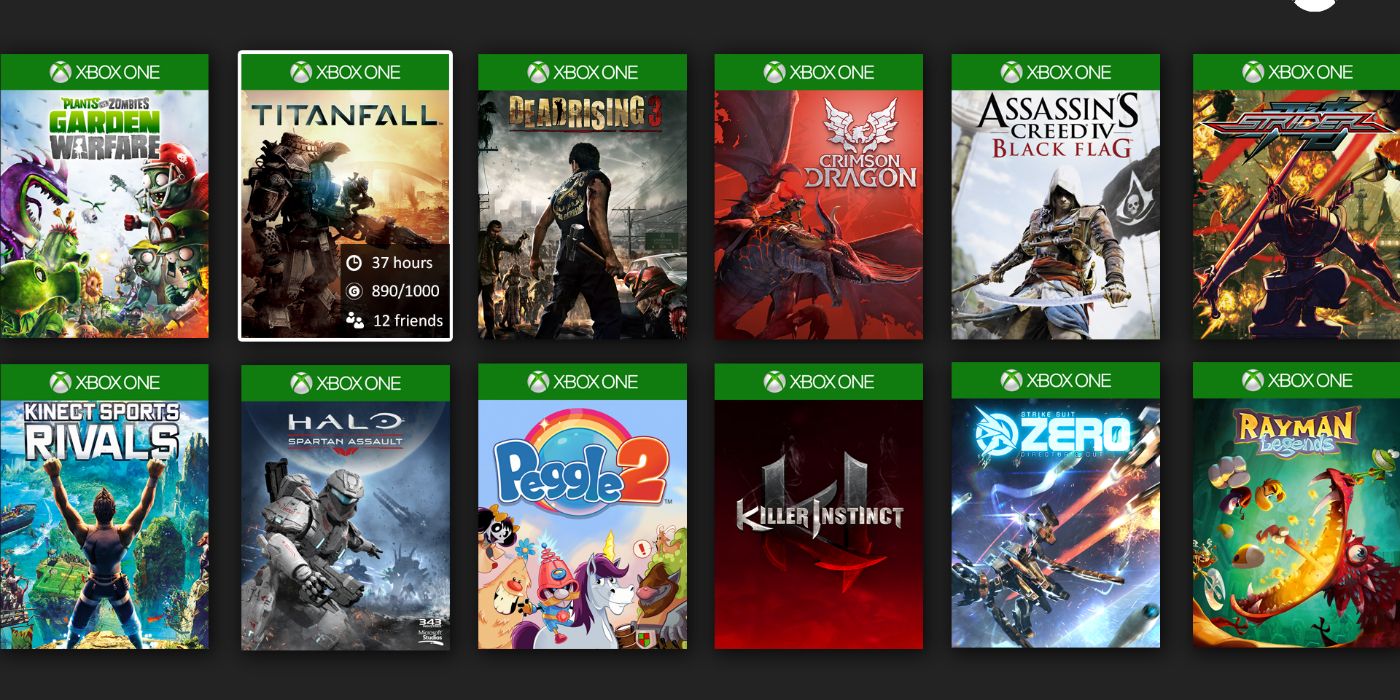

When the new consoles released in 2013, they came without the option of backward compatibility, meaning you could not play games from previous console generations using the new consoles. That may not have seemed like an egregious issue, but it really was.

Microsoft attempted to remedy this matter by introducing backward compatibility in November 2015 (Sony still does not offer it on the PS4). But don't get excited yet; while the Xbox One now supports backward compatibility with Xbox 360 games, you cannot insert just any game and expect to play it. There is a list of compatible games on the Xbox website, with more being added each month -- but the number of supported games is still limited.

Console manufacturers should reconsider their decision to omit backward compatibility when designing the next console generation. That way, instead of studios remaking and remastering virtually every major title from previous generations, players would be able to simply play the originally released game on their new console. It worked well for the Xbox 360 and PlayStation 3; why not for the next console generation?

12. Free Online Play Without Subscription

Maintaining a quality online service requires funding, which is obtained through charging consumers an annual fee. But the thing is, should people have to pay for it? When consumers already pay hundreds of dollars purchasing a console, a controller, and at least one game, why should we have to pay extra just to play said game online, especially since the majority of video games these days are geared towards online play?

The popularity of Xbox Live has ushered in an era of pay-to-play online multiplayer, whereas the PlayStation 3 featured free online play, albeit with several drawbacks. However, with the PlayStation 4, Sony implemented its own paid online subscription service: PlayStation Plus. While both subscriptions offer various benefits and incentives, such as free monthly games, most people just want to play with their friends online -- and that part, at least, should be free. Consumers pay enough as it is just to play a game that may or may not work at launch.

11. Better Premium Online Services

The primary reason people pay for online services like Xbox Live Gold and PlayStation Plus is to play online with their friends. But if Microsoft and Sony want to charge players to play online, then their services should be of the utmost quality. The thing is, consumers are currently paying top-tier prices for premium services which show little to no innovation, let alone offer worthwhile incentives. The only things that come with these services, other than the ability to play online, are occasional free games and minuscule discounts.

A logical choice, then, would be to allow online play to be free while also making a premium service available for the regular annual fee of $50 to $60, which could include services like Games with Gold and PlayStation Now, amongst other things. But as it is, those two services offer games no less than a year old. Perhaps if the companies offered better (read: newer) games or greater discounts on newly released titles, consumers would be more inclined to participate.

10. No More Timed Exclusive DLC

Downloadable content (DLC) and season passes are two terms that video gamers have come to develop an inherent animosity towards in recent years. That is due to publishers continually widening the chasm between themselves and consumers, who want the game they've paid $60 or more for to contain everything, without having to pay an additional $15 to $50 for DLC to prolong the lifespan of the game.

Nowadays, prior to a game releasing, the publisher will unveil details of the game's season pass, for which consumers pay a one-time fee (usually $50) that gives them access to all future DLC. But the thing is, sometimes that content releases on one platform first. That's due to marketing arrangements between studios and console manufacturers (see: Destiny and Sony, and Dragon Age: Inquisition and Xbox).

Obtaining downloadable content first may seem like a commercial benefit for console manufacturers, but really, all that does is force studios to alienate virtually half of their fans. In doing so, the primary factor in a person's purchase is no longer the quality of the game on a specific platform in comparison to other platforms, but rather the timing of the content's release -- and that sets a risky precedent.

9. Lower Prices on Digital Content vs. Retail

Digital content is the future. Everyone knows that, which is why computers nowadays are forgoing disc trays and why a substantial number of video games are purchased digitally. In 2010, digital sales of PC games overtook retail sales, which ushered in a new era for platforms like Steam.

The thing is, if a digital copy of a video game is cheaper to produce, then why is it not cheaper to purchase? The answer may lie in industry retailers wanting to maintain profitability. In 2014, GameStop president Tony Bartel told investors, "We want to help ensure that our industry does not make the same mistake as other entertainment categories by driving the perceived value of digital goods significantly below that of a physical game."

While prices for console games -- digital and retail -- remain at $60, a digital PC version of the same game is typically at least $10 cheaper than the retail version. If we're to buy into the next generation of consoles, then there needs to be a difference between prices for digital and retail content.

8. Competitive Pricing and More Sales

As previously mentioned, the industry-standard price for a video game is $60, whether the game is a role-playing game, an action-adventure game, a first-person shooter, or even a single-player game. Sometimes that price is justified when considering the long-term "replayability" of certain games, but other times, it isn't. Therefore, many consumers have advocated a competitive pricing structure.

The fact is, not all games are worth the same amount of money. A game like Destiny may be worth a full $60 to some people, but then annual releases like FIFA and Madden may not be. A competitive pricing structure would allow for a greater number of consumers to purchase single-player games, along with games that people would typically choose to rent rather than purchase.

Additionally, the price of games and the scarcity of discount sales have put a strain on consumers looking to buy multiple titles a year. Microsoft and Sony would benefit, along with the average gamer, by hosting more (and better) sales throughout the year. After all, there's a reason Steam is so popular amongst PC gamers -- and why it's incredibly successful.

7. Far Bigger Hard Drives

When the newest consoles released in 2013, they of course came with upgraded components. But there was an intrinsic flaw within the systems. Not only do all video games require installation, but there is a limited amount of storage space to accommodate an entire library of titles. Therefore, video gamers are left with the decision of choosing which games they want to keep installed and which ones they'll have to remove to make space for future games.

Requiring installation is not the issue at hand; the issue is that the consoles, with all of their advancements, contained only a 500GB hard drive, which could hold an average of 10-15 AAA titles, along with maybe a handful of apps. Microsoft and Sony have attempted to remedy this issue with 1TB versions of their consoles -- but the updates have come years after the consoles' initial release. The next console generation would need to maintain parity with the storage capacity of PCs if we're to buy into them.

6. No More Unused Gimmicks

Add-on equipment, especially peripherals, is nothing new to the world of gaming, but it has become more prominent recently -- and, in some cases, a hindrance. Since Nintendo released the Wii U in 2012, bundled gimmicks such as the GamePad, the Kinect for Xbox 360 and Xbox One, and the PS Eye for PlayStation 4 have become almost necessities; or at least that's what they were designed to be. But that has not come to pass. Instead, most users seldom use whichever peripheral equipment their systems came with.

Microsoft's original conception for the Xbox One included the Kinect being a central aspect of the all-in-one entertainment system. Now with the incorporation of Cortana on Xbox One -- which can be activated by speaking directly into a connected headset -- the Kinect appears superfluous. It's now an additional piece of equipment that serves no valuable, unique purpose, other than for Kinect-optimized games, which are few and far between these days. Perhaps now, manufacturers realize that forcing secondary equipment onto consumers isn't the answer to increased profits.

5. Bluetooth Support

Despite the new console generation containing numerous advancements in technology, many features have actually regressed. Chief among them is the lack of native Bluetooth support. As it stands, the Xbox One and PlayStation 4 cannot connect to any Bluetooth device that does not have a dongle. Additionally, the devices must be authorized products supported by the console manufacturer. For example, you can't connect the PlayStation Gold headset to the Xbox One, despite being able to connect it to a computer.

In comparison, on the PlayStation 3, players were able to connect their console to whichever Bluetooth-enabled device they wanted to use -- and it would work. Nowadays, when virtually all devices come Bluetooth enabled, with the option of connecting to any other Bluetooth capable device, it's ridiculous that the Xbox One and PlayStation 4 restrict their users to console-exclusive products. For users to consider purchasing a new generation of consoles, basic functions such as universal Bluetooth connectivity need to be made available.

4. Sustainable High Frame-Rate and Resolution

Ever since the Xbox One and PlayStation 4 released in 2013, there has been an ongoing controversy over games not being able to attain -- or, in some cases, maintain -- full high-definition resolution and a stable frame-rate. When the new consoles were initially announced, video gamers all around the world thought that the generation of high-definition had finally arrived. But that notion was only partially true.

Most first-party titles, such as Halo 5: Guardians and Uncharted 4: A Thief's End, are able to achieve 1080p and 60 frames-per-second, due to being produced by studios owned by the console manufacturers, whereas many third-party titles, such as Battlefield 4 and Star Wars Battlefront, are unable to achieve their full potential in terms of graphics and frame-rate.

Should there be a next console generation, not only does the disparity between consoles and PC games need to close, but a sustainable benchmark for resolution and frame-rate needs to be met -- and the foundation of such a demand lies with the components used to make up the console.

3. Ability To Run Games Without Installing

As previously mentioned, current-generation video games require a substantial amount of space for installation, and the limited hard drive storage capacity allotted to consumers is preposterous. If increasing the storage space isn't plausible, then perhaps reverting to the approach of previous console generations, in which games could be played without installation, might be the answer.

Console manufacturers should allow consumers to play a game without installing it, only necessitating downloads and installations of updates, such as for multiplayer or game-breaking glitches -- of which there are many. Otherwise, requiring someone to install a game on their hard drive with the promise of a better overall experience may appear fruitless.

Forcing installations may ensure better quality and play, but that also means consumers must pick and choose between the games in either their physical or virtual libraries to play, for there is seemingly no conceivable way (without acquiring extra hard drive space) to have all games remain installed at once.

2. Improved Controller Batteries & Cheaper Prices

The release of the new video game consoles effectively resulted in the termination of various facets from previous generations -- wired controllers being one of them. Controllers are the central aspect of console gaming -- a personal choice and stark difference in comparison to the keyboard and mouse used by PC gamers. So it would make sense that controllers should be not only reliable but also long-lasting. One or two controllers typically come in console bundles, but when purchasing an extra controller -- be it a replacement or additional controller for a friend -- the price can be steep.

An Xbox One controller with a Play & Charge Kit currently costs $74.99, takes four hours to charge, and delivers only approximately 30 hours of use. A PlayStation 4 controller, on the other hand, while inexpensive in relation, still costs a whopping $59.99 (the same price as an Xbox One controller without a Play & Charge Kit). And since PlayStation 4 controllers do not require a special kit to charge the device -- only a standard USB to micro-USB cable -- the controllers are cheaper by comparison. However, a controller should not cost as much as a game, for it is a requirement for using a console, not a frivolous add-on.

1. Fewer Remakes, More Originality

Ever since this new console generation began in Fall 2013, video gamers have been deluged with high-definition remakes and remasters of games from earlier generations, and, in many cases, collections of a previously concluded series, usually leading up to the release of a new installment in said series (e.g. Naughty Dog releasing Uncharted: The Nathan Drake Collection leading up to Uncharted 4: A Thief's End). Making one or two remakes is understandable, but it should not be the principal focus of any studio or publisher -- and therein lies the problem.

This generation has been fixated on nostalgia and the visual quality of games rather than ingenuity and setting new benchmarks. Sure, new installments -- like Halo 5: Guardians and Metal Gear Solid V: The Phantom Pain -- in established franchises introduce new elements while retaining original concepts, but the thing is, that's not enough. The video game industry subsists on commercial franchises, but in order to flourish in a time when the future of gaming seems bleak, there needs to be innovation and inventiveness that push the boundaries rather than reinforce them.

---

What do you want to see in the next generation of video game consoles? Let us know in the comments.